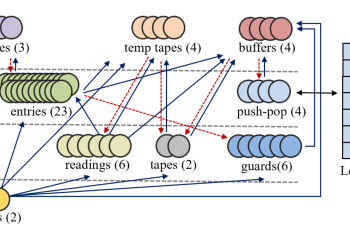

Previous work has proved that recurrent neural networks (RNNs) are Turing-complete. Those proofs, however, assume neurons with unbounded precision, which is neither practical to implement nor biologically plausible. To remove this assumption, we propose a dynamically growing memory module made of fixed-precision neurons. We prove that a 54-neuron bounded-precision RNN … Continue reading "Paper: Turing Completeness of Bounded-Precision Recurrent Neural Networks"
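To see why unbounded precision matters, here is a minimal sketch of how a single neuron value can hold an entire binary stack. The excerpt does not say which earlier proofs it means, so this assumes the classic base-4 fractional encoding associated with Siegelmann and Sontag's construction. The function names (`push`, `top`, `pop`, `encode`) are hypothetical and chosen only for illustration. The stack is stored as an exact rational number, and each push adds one base-4 digit, so the precision needed grows with the stack depth.

```python
from fractions import Fraction

# Assumption: base-4 fractional stack encoding (Siegelmann-Sontag style).
# Bit 0 is stored as base-4 digit 1, bit 1 as base-4 digit 3.
# The empty stack is the value 0.

def push(s, bit):
    """Push a bit: shift existing digits right, put the new digit first."""
    return s / 4 + Fraction(2 * bit + 1, 4)

def top(s):
    """Read the top bit with a saturated-linear unit: clamp(4s - 2, 0, 1)."""
    return int(min(max(4 * s - 2, 0), 1))

def pop(s):
    """Remove the top digit and shift the remaining digits left."""
    return 4 * s - (2 * top(s) + 1)

def encode(bits):
    """Push bits in order onto an empty stack; the last bit ends up on top."""
    s = Fraction(0)
    for b in bits:
        s = push(s, b)
    return s

if __name__ == "__main__":
    s = encode([1, 0, 1])
    print(s, top(s), pop(s))
    # The denominator is 4**depth, so depth n needs about 2n bits of precision.
    for depth in (1, 8, 64):
        print(depth, encode([1] * depth).denominator.bit_length())
```

Because the denominator after `n` pushes is `4**n`, no fixed-width number format can represent stacks of arbitrary depth. That is the assumption the excerpt says the proposed growing memory module removes.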
